Building the Infrastructure Backbone for Autonomous Systems

We have a ways to go in developing the essential digital and physical infrastructure to accelerate autonomy. But we’ll get there.

“Jane, stop this crazy thing!” yells George Jetson to his wife, as they encounter another erratic failure from one of the many household gadgets in the year 2062. Through its three seasons, the producers of “The Jetsons” envisioned a family of four navigating a variety of edge cases that their robot dogs and maids could not handle. In the year 2024, we too encounter similar, albeit far fewer, imperfect robotic systems moving us through our daily lives. For instance, I took my very first ride in a Cruise back in summer 2021.1 While the autonomous taxi flawlessly navigated to our location, it faltered when it was unable to find a suitable place to drop us off. Soon the car was beginning a seemingly endless driving loop between our starting point and destination. Thankfully, we hit the conveniently located “emergency stop” button.

Were the creators of the Jetsons to ride in a driverless car today, we believe they would be satisfied with their overall autonomous ride (today, we regularly ride in Waymos around San Francisco that operate relatively seamlessly). But we also suspect they would be underwhelmed by how few autonomous and robotic systems appear in the rest of our lives. Despite the billions invested in robotics and autonomous systems, their adoption remains limited, primarily concentrated in a few cities like San Francisco and specific industries such as warehouse management and manufacturing. There is no doubt that autonomous systems and robotics will ultimately transform the future of warfare, logistics, transportation, manufacturing and much more. But the pace of that transformation has been slower than many experts (and TV producers) believed decades ago.

So, why haven’t autonomous systems and robotics yet lived up to their promise of revolutionizing transportation, logistics, warfare, and more? And what will it take to develop autonomous systems that can provide the US and its allies with a strategic edge in future conflicts and bolster the resiliency of critical industries like manufacturing and logistics? I asked my colleague Akhil Iyer, a Principal at Shield Capital, who has explored the future of autonomous systems both in the private sector and as a prior U.S. Marine, to co-author this post on the kinds of autonomy-enabling technologies that will accelerate the development of autonomous systems and get us one step closer to the world of the Jetsons!

Akhil and I argue that we currently lack the mature physical and digital infrastructure needed to address the edge cases and environmental challenges core to autonomous system development and deployment. However, we remain optimistic about the future of this space, driven by the operators and entrepreneurs working to overcome these barriers.

Limitation #1: Autonomy-enabling digital infrastructure

Let’s start with the digital world. Consider the world of web app development: developers have an extensive array of tools at their disposal, offering support for everything from coding assistance and test management to cloud infrastructure handling and beyond.

This is not yet the case with autonomous systems, whose developer ecosystem remains more nascent and fragmented. Many autonomous systems developers describe rebuilding the same common tooling, such as testing and simulation tools, at every autonomous systems organization where they have worked. Others rely on legacy hardware and electronics design and testing tools that were not built for intelligent systems.2 Building new tooling or relying on adjacent legacy tools is slow and inefficient, and it makes it difficult to adopt cutting-edge techniques like re-simulation, where engineers rapidly incorporate live test results into further simulated tests.

Luckily, an ecosystem for autonomy-enabling digital infrastructure is shaping up, driven in large part by the hard lessons of early autonomy pioneers and by the growing optimism that we will soon begin to see a world filled with autonomous systems.

A number of startups, founded by engineers who built custom stacks at autonomous driving companies like Cruise and Waymo, have emerged to provide third-party developer tools for autonomous systems developers. Some of these founders built similar tools in house at previous employers after failing to find a third-party solution. New startups like Pictorus and Collimator aim to replace Simulink (and other legacy software programming tools for hardware) with web-based, cloud-native low-code robotics programming tools that allow for collaboration, version control, and more modern programming languages (ex: Rust).

Companies from Elodin to PhysicsX have developed better simulation tools for testing autonomous systems and robots, speeding up development of these systems. ReSim AI, for example, specifically designed its tools to accelerate internal simulation efforts as autonomous startups leverage more virtual testing before undertaking costlier physical testing. Simulation tools have been and will remain crucial for autonomous systems developers, enabling them to rapidly test their systems in a variety of simulated environments before further physical testing. This is not to suggest that simulation will replace physical testing, but expanding the simulation toolkit will make physical testing more efficient for both engineers and the regulatory bodies charged with ensuring system viability and safety.

To be sure, autonomous system developers do have access to simulation environments like Unreal Engine, and many in the automotive space have leveraged Applied Intuition for their autonomous system development.3 Additionally, the U.S. Air Force has championed its AFSIM simulation framework for vendors seeking to test and iterate with its government customers. But many existing tools still require significant internal work that does not contribute to core autonomous system development. For instance, developers still need to build and manage the cloud infrastructure that runs complex simulations in parallel.

Foxglove, Rerun, Model Prime, Nominal, and Sift all help autonomous systems developers collect, manage, visualize, and analyze real-world test data. Ember Robotics helps robotics engineers monitor and diagnose problems with cost-effective off-the-shelf components like cameras, while Miru enables robotics engineers to manage and deploy software on their robotics fleets. Fid Labs is working to develop code generation tools for robotics engineers (starting with code generation for sensor fusion), and Code Metal AI’s code generation tools help autonomous systems engineers translate code written in high-level languages like Python and MATLAB into efficient, edge-deployable code that can run on size, weight, power, and cost (SWaP-C) constrained hardware like autonomous drones.

Further, some startups are working to improve open source robotics software libraries, like ROS, the Robot Operating System,4 which increase the speed of autonomous systems development. ROS is the most popular library of open source robotics software that assists with robotic coordinate transform management, visual navigation capabilities, planning and execution of complex robotic arm movements, message-passing between processes, and package management. However, despite its popularity and functionality, ROS was developed more than a decade ago and is updated infrequently, so many of its libraries are not state-of-the-art. Some startups are working to upgrade legacy ROS libraries. Cerulion, for example, is a robotics middleware startup that seeks to improve robotics communications by implementing hyper-fast communications protocols on top of ROS.

Adoption of these new, modern robotics development tools remains at an early stage, but these advancements will significantly accelerate the next generation of autonomous systems initiatives, as future developers will not be hampered by legacy tooling or the need to build tooling in house. And while new startups will seek to protect core IP in autonomous software, modeling and system refinement, these companies will look to others to provide the relatively undifferentiated work of building the design, testing, and validation infrastructure.

Limitation #2: Autonomy-enabling Physical Infrastructure

In addition to an underdeveloped developer software ecosystem, autonomous systems also face a number of physical constraints including supply chain issues, component costs, and access to physical testing sites. Increasingly, these constraints apply to both defense and domestic applications of autonomous systems.

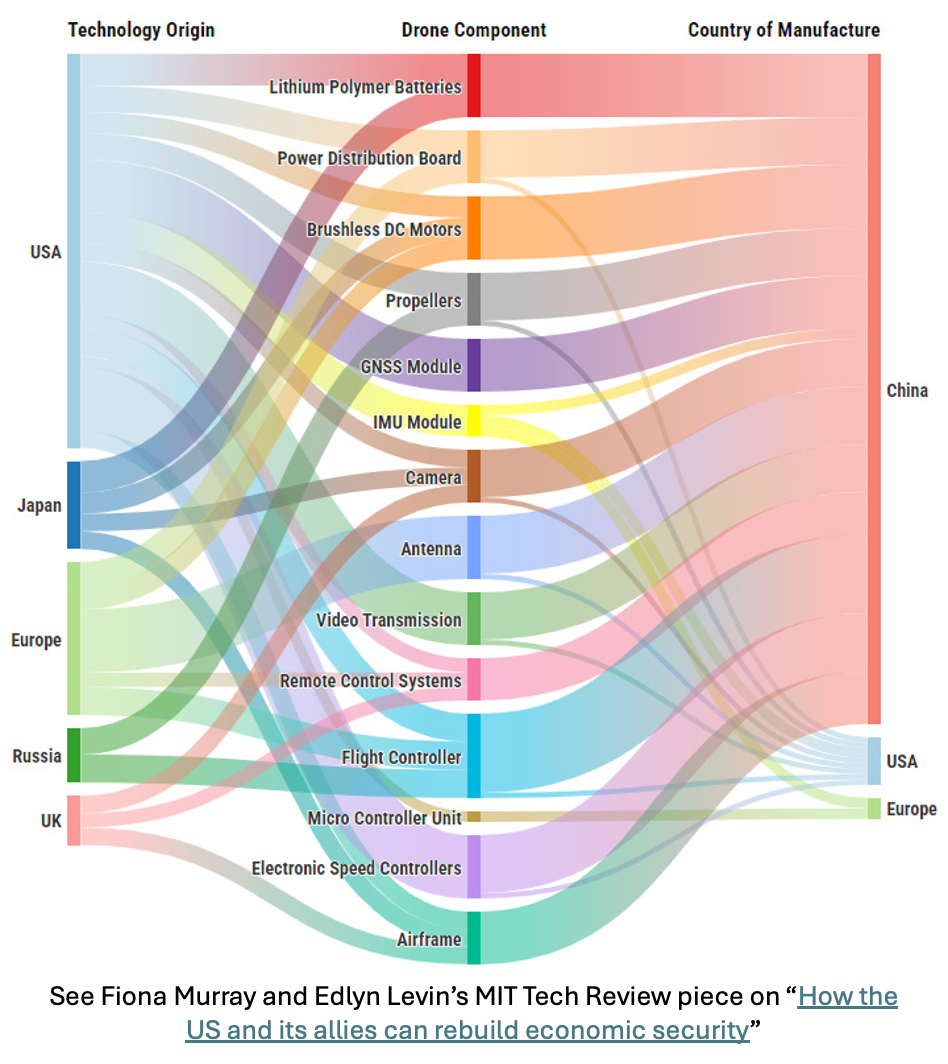

First, U.S. manufacturers, especially in the small drone industry, remain outmatched by China. DJI’s prowess reflects the massive supply chain advantage that China has accrued over the past several decades. By leveraging the commercial off-the-shelf advantages provided by Shenzhen’s manufacturing infrastructure, DJI has been able to build small drones with a bill of materials of less than $200. The U.S. cannot match this. And despite recent policy efforts to curtail the use of Chinese-made drones domestically, the U.S. has a ways to go to catch up. In order to do so, the U.S. needs to establish reliable supply chains for critical sub-components, from datalinks to laser5 rangefinders to propulsion systems. Substantial effort is required to rebuild the necessary industrial base, but that is better covered elsewhere (see previous blog post on building in America).6

Another pressing physical challenge for drone and other autonomous system providers is gaining access to realistic physical testing spaces. Regulatory bodies like the FAA play a crucial role in ensuring safety, though historically they've faced criticism for being overly restrictive. These types of constraints have led companies like Zipline to amass flight hours outside the country in places like Africa, where regulations are less strict, before ultimately gaining approvals to test and finally operate in the U.S.

Constraints are further exacerbated in the military context. As highlighted in a Wall Street Journal article earlier this year, U.S. drone providers have struggled to operate in Ukraine due to electronic warfare (EW) and other stresses of wartime operation, which demand resilient data links and resilient navigation. The inability of U.S. drones to operate effectively in a contested electromagnetic environment can in part be traced back to the lack of widely available domestic stress testing.

While much can be done inside anechoic chambers, many startups face the dilemma of either waiting to access domestic military test ranges with simulated electronic interference, or sending their drones and employees to Ukraine to incorporate feedback from front line experience. More recently, we’ve seen a handful of more established companies and newer startups consider providing testing-as-a-service for others building military-grade autonomous systems. There are also examples of U.S. government efforts to enable physical testing of novel systems on its military ranges, such as the Air Force’s Agility Prime initiative that enabled electric vertical takeoff and landing (eVTOL) companies like Joby and Archer to leverage military airspace without following the same stringent FAA requirements. Going forward, government leaders could scale up their efforts to support similar testing for startup and incumbent autonomous system providers.

A final element of tackling the physical environment is navigating without GPS.7 Militaries are no longer the only groups concerned with navigation in contested environments where GPS availability is not guaranteed. The use of EW in eastern Europe and elsewhere has affected commercial and government aviation operations alike. Aerial vehicles in particular struggle to navigate without GPS. This continued trend has spawned a number of startups building technology for autonomous systems operating in EW and GPS-denied environments. Startups like Theseus, Tera AI, Vermeer, and AstraNav are all developing technology that enables navigation without GPS. There are several different technological approaches to GPS-denied navigation. For example, Theseus uses computer vision together with accelerometer and gyroscope data, whereas AstraNav’s technology navigates by tracking changes in Earth’s magnetic field.
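To see why inertial sensors alone are not enough, consider the simplest approach, dead reckoning: integrate gyroscope readings into a heading and accelerometer readings into speed and position. The sketch below is a 2D toy and does not represent any of the named companies' methods; the function name, the sample format, and the bias value are all illustrative assumptions.

```python
import math

def dead_reckon(samples, dt):
    """Integrate (forward_accel m/s^2, yaw_rate rad/s) samples into a 2D pose.

    Starts at rest at the origin and returns (x, y, heading).
    """
    x = y = heading = speed = 0.0
    for accel, yaw_rate in samples:
        heading += yaw_rate * dt              # gyroscope -> heading
        speed += accel * dt                   # accelerometer -> speed
        x += speed * math.cos(heading) * dt   # speed + heading -> position
        y += speed * math.sin(heading) * dt
    return x, y, heading

# Hypothetical flight: accelerate at 1 m/s^2 for 10 s, then coast for 50 s.
dt = 0.1
truth = [(1.0, 0.0)] * 100 + [(0.0, 0.0)] * 500
# The same flight seen through an accelerometer with an assumed 0.05 m/s^2 bias.
biased = [(accel + 0.05, yaw_rate) for accel, yaw_rate in truth]

true_x, _, _ = dead_reckon(truth, dt)
est_x, _, _ = dead_reckon(biased, dt)
drift = est_x - true_x
print(f"position drift after 60 s: {drift:.1f} m")
```

Under these assumptions, a small constant bias grows into a position error of roughly 90 meters within a minute, because the error is integrated twice. That is why inertial data is typically fused with a second source, such as computer vision or magnetic-field measurements.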

Limitation #3: Path to true autonomous “swarms”

Before diving into Silicon Valley’s favorite topic of generative AI, it’s worth noting that many unmanned systems and robots deployed to date are not truly autonomous (instead they are remotely controlled or follow pre-programmed routes). Furthermore, even fewer systems exhibit autonomous swarming capabilities. The dazzling aerial drone shows witnessed at events like the Super Bowl are entirely pre-programmed; they are not dynamic swarms working together to intelligently make decisions in response to their environments. But as individual systems demonstrate higher levels of autonomous functionality, the ability to transition to multi-platform autonomous behavior comes closer to reality.

In speaking with some of the leading researchers in multi-robot orchestration, we heard that realizing this swarming future hinges on both technical and human factors.

First, swarm developers must ensure that each additional system in the group does not significantly slow the decision-making process of the entire swarm. To handle swarming at scale, researchers must optimize algorithms to ensure each node in a drone swarm remains computationally efficient. Professor Mac Schwager and Stanford’s Multi-Robot Systems Lab have published fascinating research addressing the challenges of distributed optimization. If future autonomous system developers distill the geospatial information required for each drone to efficiently compute and decide on subsequent actions, we will see autonomous systems exhibit the same level of dynamic swarming as some bird species.
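One way to picture the scaling concern: in a distributed scheme, each drone exchanges data only with its neighbors, so the work per node per round depends on neighbor count rather than swarm size. Below is a minimal sketch of average consensus, a textbook building block of distributed optimization. It assumes a fixed ring topology, a hand-picked step size, and made-up starting estimates, and it is not drawn from the Stanford lab's published methods.

```python
def consensus_step(values, neighbors, step=0.3):
    """One synchronous round: each node nudges its value toward its neighbors'."""
    return [
        v + step * sum(values[j] - v for j in neighbors[i])
        for i, v in enumerate(values)
    ]

def ring(n):
    """Hypothetical topology: node i hears only nodes i-1 and i+1."""
    return {i: [(i - 1) % n, (i + 1) % n] for i in range(n)}

# Each drone starts with its own local estimate (e.g., of a target's altitude).
estimates = [10.0, 14.0, 9.0, 12.0, 15.0, 12.0]
links = ring(len(estimates))
for _ in range(100):
    estimates = consensus_step(estimates, links)

# After enough rounds every node holds (nearly) the swarm-wide average.
spread = max(estimates) - min(estimates)
print(f"agreed value: {estimates[0]:.3f}, spread: {spread:.2e}")
```

No node ever sees the whole swarm, yet all six converge on the global average of 12. The catch is that the number of rounds needed still grows with swarm size and depends on the topology, which is part of what makes distributed optimization a hard research problem.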

“Swarming” birds (in a phenomenon known as murmuration) are continuously communicating visually and vocally, which brings us to the next related issue: networking. The amount of data each neighboring (or commanding) node in a swarm needs will determine the scale and cost of each networking solution. Several known and widely used providers, like Silvus and TrellisWare, supply high-end networking solutions costing several thousand dollars, outside the price point of cheaper drone providers. The other end of the (cost) spectrum includes systems from Somewear Labs and protocols like LoRa. However, these systems come with a tradeoff: they have lower throughput than more expensive alternatives. Ultimately, a broader set of networking options is likely required to accelerate autonomous systems' swarmed use.
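A back-of-the-envelope calculation makes the throughput tradeoff concrete. The sketch below assumes every drone broadcasts a small state message on one shared channel. The message size, update rate, utilization headroom, and both link speeds are illustrative assumptions rather than vendor specifications (LoRa-class links are typically quoted in the kilobits-per-second range, while high-end mesh radios operate in the megabits-per-second range).

```python
def offered_load_bps(nodes, msg_bytes, rate_hz):
    """Total bits per second if every node broadcasts its state on a shared channel."""
    return nodes * msg_bytes * 8 * rate_hz

def max_nodes(link_bps, msg_bytes, rate_hz, utilization=0.5):
    """Largest swarm a shared link supports, keeping headroom for protocol overhead."""
    return int(link_bps * utilization // (msg_bytes * 8 * rate_hz))

# All figures below are illustrative assumptions, not vendor specifications.
STATE_MSG_BYTES = 50   # position, velocity, heading, status
UPDATE_HZ = 5          # state broadcasts per second per drone

links = [("low-rate link", 5_000), ("high-rate mesh radio", 10_000_000)]
for label, link_bps in links:
    n = max_nodes(link_bps, STATE_MSG_BYTES, UPDATE_HZ)
    print(f"{label}: up to {n} drones")
```

With these numbers each drone offers 2,000 bits per second, so the assumed low-rate link saturates almost immediately while the assumed mesh radio supports thousands of nodes. Shrinking the message or the update rate is the only way to grow a swarm on a cheap link, which ties the networking question back to how much information each node really needs.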

The final component enabling swarming is the level of human comfort with daily use of these systems. The trials and tribulations of autonomous taxis in San Francisco, along with ongoing debates about the use of autonomous systems in warfare, highlight that societal adoption of autonomy hinges on crucial regulatory and ethical considerations.8 Managing human comfort with autonomous systems is particularly pressing in the military context, where the pace of autonomous swarm deployments may also hinge upon the architectures needed to ensure meaningful human control.

So what about generative AI for autonomy?

As I’ve written about in past posts, there is significant promise for large generative AI foundation models to lead to a massive improvement in autonomous systems and robotics performance. Historically, the ML models used in autonomous systems were brittle – failing in novel scenarios that were not present in training data (ex: a computer vision model may struggle to recognize an object in the sunlight vs. in the shade). However, foundation models applied to autonomous systems have the potential to enable these systems to generalize and perform effectively in new environments and tasks that were not explicitly included in training data.

Over the past couple years, autonomous systems researchers have published a number of papers9 outlining how “Vision Language Models” (VLMs) and “Vision Language Action Models” (VLAs) can be applied to autonomous systems. VLA models incorporate vision and language data to generate robotics control policies that task a robot with a particular action. Researchers train robotics foundation models on large multimodal datasets that include natural language text descriptions of a task combined with a series of image frames (normally RGB but sometimes with depth or LiDAR) of a robot carrying out that task combined with the current state of the robotic joints and sensor readings. In a similar vein, researchers are developing specialized foundation models for autonomous vehicles based on several modalities including text, audio, video, LiDAR point clouds, and vehicle control signals to execute autonomous vehicle tasks including perception, visual understanding, and planning.
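To make the shape of this training data concrete, here is a minimal sketch of how one demonstration might be represented and flattened into supervised examples. The class and field names are hypothetical, real datasets differ in layout and add modalities like depth or LiDAR, and the tiny 2x2 "image" stands in for a real camera frame.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Step:
    rgb_frame: List[List[List[int]]]   # H x W x 3 pixel values
    joint_positions: List[float]       # robot joint state at this moment
    action: List[float]                # control command recorded at this moment

@dataclass
class Episode:
    instruction: str                   # natural-language task description
    steps: List[Step]

def to_training_pairs(episode):
    """Flatten an episode into (inputs, target_action) pairs for supervised training."""
    return [
        ((episode.instruction, s.rgb_frame, s.joint_positions), s.action)
        for s in episode.steps
    ]

# A made-up two-step demonstration with a 2x2 RGB frame.
frame = [[[0, 0, 0], [255, 255, 255]], [[128, 128, 128], [64, 64, 64]]]
episode = Episode(
    instruction="pick up the blueberry",
    steps=[
        Step(frame, joint_positions=[0.0, 0.5], action=[0.1, -0.1]),
        Step(frame, joint_positions=[0.1, 0.4], action=[0.0, 0.0]),
    ],
)
pairs = to_training_pairs(episode)
print(len(pairs), "training pairs")
```

Each pair asks the model to predict the recorded action given the instruction, the current image, and the current joint state. Every such pair has to come from a physical robot actually performing the task, which is why this data is far harder to gather than text or images from the internet.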

To build these foundation models for robotics and autonomous vehicles, researchers will need access to large robotics and autonomous vehicle data sets which do not exist today. The promise of large foundation models is that by training these models on very large, diverse data sets, they exhibit emergent capabilities, where models are able to perform well at tasks they were not explicitly trained on (ex: a robot would be able to pick up a strawberry, even if only trained on data related to picking up blueberries). Eventual effectiveness of foundation models for autonomous systems is still up for debate,10 but we are starting to see evidence from researchers like Chelsea Finn, Vincent Vanhoucke, and those at Google DeepMind that scaling laws may apply to autonomous systems.

Unlike the text and image data behind vision and language foundation models like GPT-4 and DALL-E 3, data sets for training autonomous systems foundation models are difficult to collect. To train these models, researchers need large multimodal datasets that include natural language text descriptions of a task combined with a series of image frames (normally RGB but sometimes with depth or LiDAR) of an autonomous system carrying out that task combined with the state of the autonomous system’s joints, sensor readings, and control signals. One way researchers are collecting this data is by manually teleoperating autonomous systems and recording data across a variety of teleoperated tasks. In fact, several startups like Sensei Robotics are developing platforms to help robotics companies outsource teleoperated robotics data collection. Other autonomous systems developers, like Tesla, have deployed non-autonomous versions of their products to consumers, and are collecting data at scale from human drivers as they manually operate systems. Similarly, Cruise and Waymo have deployed autonomous vehicles in limited environments (specific cities they have manually mapped, like San Francisco and Phoenix), and are collecting data from their systems deployed in the real world.

Some research suggests that a foundation model for autonomous systems may not actually require large amounts of sensor and controls data (which is hard to collect at scale). For instance, Google’s recent RT-2 model shows that researchers may be able to train a large model on internet-scale vision and language data and then fine-tune that model on a smaller set of autonomous systems data. This kind of fine-tuning approach could also help unlock foundation models for a variety of autonomous systems configurations. Part of the challenge of developing robotics foundation models is that robots come in many shapes and sizes including robotic arms, ground vehicles, maritime vehicles, aerial vehicles, quadrupeds, and more. Perhaps researchers will find a way to develop a general purpose foundation model for autonomous systems that is easy to fine-tune on specific data for specific hardware configurations, whether maritime, ground, or otherwise.

While foundation models capable of fully controlling autonomous systems may still be out of reach, researchers are focusing on developing models for more specialized functions within the autonomous systems stack, particularly in areas like perception. Several startups are emerging to build large foundation models that understand the physical world (for example, see Fei-Fei Li’s new startup World Labs, which recently raised $230M). These models could serve as perception engines in autonomous systems, helping them understand the physical world to make decisions. In order to build these models, startups are developing technologies that enable them to efficiently scrape websites like YouTube for high-quality video data to train perception algorithms.11

Tomorrow’s autonomous future

Despite the challenges in building autonomy we highlighted, the good news for those building the Jetsons-like future is it’s becoming easier. The emergence of better robotics developer tools, resilient navigation and communications, physical supply chains, and foundation models will accelerate the development and adoption of autonomous systems, leading to a more productive, efficient, and safer world.

As always, please reach out if you or anyone you know is building autonomous systems or autonomy-enabling products at the intersection of national security and commercial technologies. Please let us know your thoughts – I know this is a quickly changing technology space and welcome any and all feedback. What other technologies are needed to accelerate autonomous systems development? How else can the US DoD support autonomous systems testing and development?

At the time, Cruise was only operating after 9PM in a small part of San Francisco.

The primary hardware and electronics design and testing tools used today (like those developed by Cadence, MathWorks, Altium, and Siemens) are decades old, rely on legacy code, and have clunky user interfaces (UIs). Further, most of these design tools do not allow for effective collaboration and version control. For instance, popular low-code robotics programming software Simulink (developed by MathWorks) currently generates legacy C/C++ code, does not allow for effective collaboration, and has limited 3D simulation capability.

Note: ROS is not really an operating system.

There are currently no NDAA-approved small size, weight, and power (SWaP) lasers on the market, which is a major disadvantage for Western drones vs. DJI. Without a laser rangefinder, it’s *really* hard to geo-locate something at a distance with any level of precision, which has major tactical implications.

Take a look at Anduril’s recent manufacturing initiatives and the recent discussion about Taiwan’s role in US drone supply chains as other examples of recent efforts to rebuild this base.

Note: GPS-denied navigation is more or less solved for ground-based vehicles.

It is worth reading the late Dr. Ash Carter’s piece on “AI-Assisted Decision-making,” in which he clarifies the intent of his original DoD Directive on Autonomous Weapons: “Note that the directive does not use the language ‘man in the loop.’ The language of the directive was crafted to suggest that the DOD would insist upon other more practical forms of “human judgment” built into its AI-enabled weapons systems.”

We highly recommend “Will Scaling Solve Robotics” by Nishanth Kumar as an excellent overview of the debate over whether scaling data sets and foundation models will advance the field of robotics and whether it is possible to build robotics foundation models at all.

It’s not advisable to just train a perception model on all Internet video data – much of the video data available online is complete garbage. Further, there is simply too much Internet data to efficiently train on – petabytes of data are uploaded to YouTube each day. So, researchers will need to sort high quality data from a massive amount of low quality videos, ensuring that models are trained on only relevant data.

Note: The opinions and views expressed in this article are solely our own and do not reflect the views, policies, or position of our employer or any other organization or individual with which we are affiliated.
